Healthcare AI Agent Readiness Taxonomy: The Compliance Blueprint for Enterprise Procurement

A deployment classification system for AI teams building or deploying healthcare AI agents — with the evidence pack enterprise procurement actually checks.

Who this is for

→ AI agent vendors building for enterprise healthcare procurement

→ Healthcare operators (health systems, payers) evaluating or deploying AI agents

→ AI product teams at platforms building healthcare-specific layers

What you get

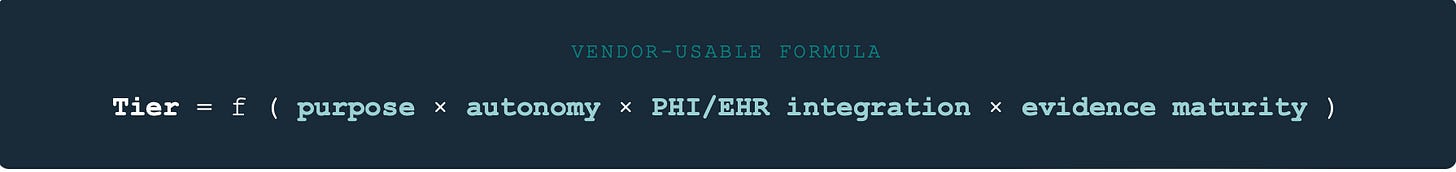

A Tier label (1–5) for your agent based on purpose, autonomy, and PHI/EHR scope — plus the evidence pack index procurement will check, and an EU AI Act risk overlay.

Most “AI governance in healthcare” content is frameworks without an operating layer.

This taxonomy is the opposite.

It’s a deployment classification system — built so AI teams working with healthcare AI agents can answer three questions enterprise procurement always asks:

What is this agent permitted to do?

What evidence do you have that it’s governed?

Where does your compliance posture break down at runtime?

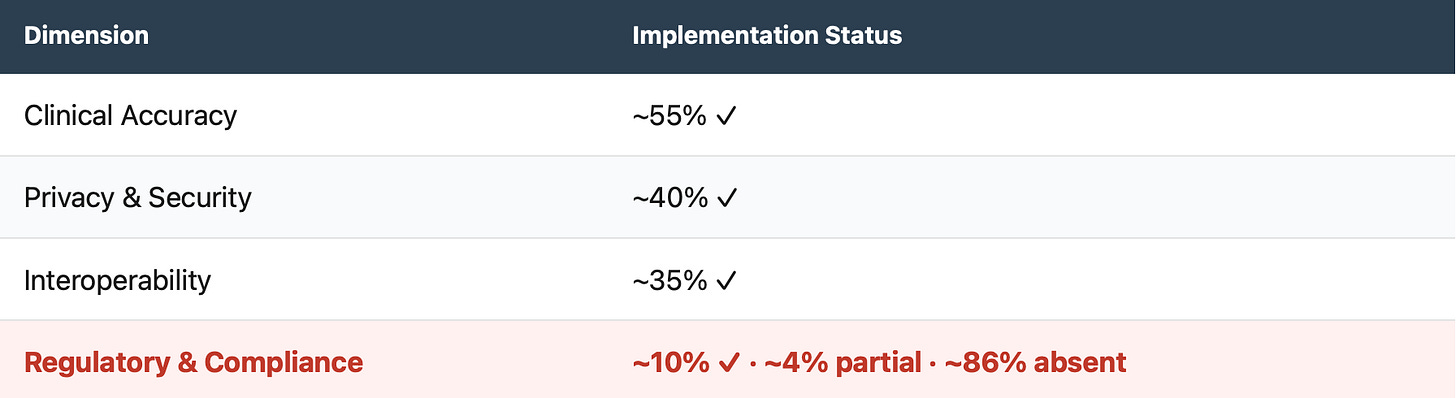

The research base makes the problem concrete: a 2026 IEEE Access taxonomy reviewed 49 published LLM-based healthcare agent systems (research papers documenting such agents in healthcare contexts) and found that Regulatory & Compliance Constraints is the most underbuilt dimension, with ~10% fully implemented and ~86% absent.

The reason is architectural. Agentic systems shift governance from securing stored data to governing real-time data transactions: tool calls, EHR queries, multi-step chains. HIPAA provides baseline safeguards (audit controls, access controls), but it doesn’t specify agentic runtime governance: tool-call provenance, multi-agent chaining, or runtime policy enforcement. The EU AI Act does. And enterprise CISOs and DPOs are already asking for it.
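To make "runtime governance" concrete, here is a minimal sketch of a tool-call gate that enforces an allow-list and records provenance for every attempted call, including denied ones. All names (`GovernedAgent`, `ToolCallRecord`, `parent_call`, the example tools) are hypothetical and chosen for illustration; this is not a schema taken from HIPAA, the EU AI Act, or the IEEE Access paper.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Callable, Optional


@dataclass(frozen=True)
class ToolCallRecord:
    """One audit entry per attempted tool call (hypothetical schema)."""
    agent_id: str
    tool: str
    allowed: bool
    parent_call: Optional[int]  # index of the call that triggered this one, if chained
    timestamp: str


@dataclass
class GovernedAgent:
    agent_id: str
    allowed_tools: set
    tools: dict
    audit_log: list = field(default_factory=list)

    def call(self, tool: str, parent_call: Optional[int] = None, **kwargs: Any) -> Any:
        allowed = tool in self.allowed_tools
        # Log before executing, so denied attempts are evidenced too.
        self.audit_log.append(
            ToolCallRecord(
                agent_id=self.agent_id,
                tool=tool,
                allowed=allowed,
                parent_call=parent_call,
                timestamp=datetime.now(timezone.utc).isoformat(),
            )
        )
        if not allowed:
            raise PermissionError(f"{self.agent_id} is not permitted to call {tool}")
        handler: Callable[..., Any] = self.tools[tool]
        return handler(**kwargs)


# Illustrative tools only; a real deployment would wrap actual integrations.
agent = GovernedAgent(
    agent_id="scheduler-01",
    allowed_tools={"lookup_slots"},
    tools={
        "lookup_slots": lambda clinic: ["09:00", "09:30"],
        "write_ehr_note": lambda text: "written",
    },
)

slots = agent.call("lookup_slots", clinic="cardiology")
try:
    # A chained call (triggered by call 0) that exceeds the agent's permission.
    agent.call("write_ehr_note", parent_call=0, text="follow-up booked")
except PermissionError:
    pass
```

The design choice worth noting is that the log entry is written before the permission check raises, so the audit trail shows what the agent tried to do, not only what it was allowed to do.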

Figure: Compliance Gap in Published Healthcare AI Agent Systems (IEEE Access 2026 · 49 published LLM-based healthcare agent systems reviewed)

The IEEE Access taxonomy defines compliance as evidenced — not asserted: documented lawful bases and consent, role-scoped access and retention, cross-border transfer controls, and formal risk assessment policies tied to technical safeguards. That’s the exact reason vendors lose deals at CISO/DPO review: they can demo intelligence, but can’t demonstrate auditable control.

The Core Idea: Tier = Permission-to-Act

This taxonomy does not rank model capability.

It ranks deployment permission — the level of clinical authority and system privileges an agent can be granted (authority × privileges × assurance), and the minimum evidence required to govern that permission safely.

SaMD/non-SaMD classification is an overlay driven by intended use at the function level — not by the model or the platform. An agent can have both operational and clinical functions; each must be classified per function.

Where purpose separates:

Operational / Administrative: scheduling, billing, intake ops, documentation — no medical-purpose claims

Clinical: functions intended for diagnosis, triage, or treatment decisions → SaMD-likely at the function level
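Per-function classification can be sketched as a small data structure. The example below assumes a hypothetical `AgentFunction` record and two made-up function names; the `samd_likely` flag only marks a function for regulatory review and is not a SaMD determination, which depends on intended use and the claims made.

```python
from dataclasses import dataclass
from enum import Enum


class Purpose(Enum):
    OPERATIONAL = "operational"  # scheduling, billing, intake ops, documentation
    CLINICAL = "clinical"        # diagnosis, triage, or treatment decisions


@dataclass(frozen=True)
class AgentFunction:
    name: str
    purpose: Purpose

    @property
    def samd_likely(self) -> bool:
        # Flag for review only; the actual classification follows intended use.
        return self.purpose is Purpose.CLINICAL


# One agent, two functions, classified separately (names are illustrative).
functions = [
    AgentFunction("appointment_scheduling", Purpose.OPERATIONAL),
    AgentFunction("symptom_triage", Purpose.CLINICAL),
]

needs_samd_review = [f.name for f in functions if f.samd_likely]
print(needs_samd_review)
```

Keeping the flag on the function rather than on the agent mirrors the rule above: the same product can carry both operational and clinical functions.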

The 4 Classification Axes

① Purpose (Operational vs Clinical; SaMD trigger)

Operational: no medical-purpose claims.

Clinical: diagnosis/triage/treatment intent → SaMD classification depends on intended use and claims made.

② Autonomy Level

Single-step assistant → tool-calling → multi-step orchestration → execution-capable workflows.

③ PHI Exposure + EHR Integration Depth

No PHI → de-identified only → PHI in runtime → EHR read-only → EHR write/execute.

④ EU AI Act Risk Posture

Minimal / limited risk → transparency obligation → Annex III high-risk → regulated product embedding.

Obligations phase in progressively (general application Aug 2026; regulated-product embedding Aug 2027), so track implementation guidance and harmonised-standards updates through 2026.
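The four axes can be encoded as ordered levels, which makes scope changes between releases easy to detect. This is a sketch under assumptions: the enum and field names are hypothetical, the ordering simply follows the arrows in the axis descriptions, and the mapping from an axis profile to a Tier is deliberately not encoded here.

```python
from dataclasses import dataclass, fields
from enum import IntEnum


class Purpose(IntEnum):
    OPERATIONAL = 0
    CLINICAL = 1


class Autonomy(IntEnum):
    SINGLE_STEP = 0
    TOOL_CALLING = 1
    MULTI_STEP_ORCHESTRATION = 2
    EXECUTION_CAPABLE = 3


class PhiScope(IntEnum):
    NO_PHI = 0
    DEIDENTIFIED_ONLY = 1
    PHI_IN_RUNTIME = 2
    EHR_READ_ONLY = 3
    EHR_WRITE_EXECUTE = 4


class EuAiActPosture(IntEnum):
    MINIMAL_OR_LIMITED = 0
    TRANSPARENCY_OBLIGATION = 1
    ANNEX_III_HIGH_RISK = 2
    REGULATED_PRODUCT_EMBEDDING = 3


@dataclass(frozen=True)
class AxisProfile:
    purpose: Purpose
    autonomy: Autonomy
    phi_scope: PhiScope
    eu_posture: EuAiActPosture


def escalated_axes(before: AxisProfile, after: AxisProfile) -> list:
    """Return the axes on which `after` sits at a higher level than `before`."""
    return [
        f.name
        for f in fields(AxisProfile)
        if getattr(after, f.name) > getattr(before, f.name)
    ]


# Illustrative release comparison: v2 adds execution and EHR write access.
v1 = AxisProfile(Purpose.OPERATIONAL, Autonomy.TOOL_CALLING,
                 PhiScope.EHR_READ_ONLY, EuAiActPosture.TRANSPARENCY_OBLIGATION)
v2 = AxisProfile(Purpose.OPERATIONAL, Autonomy.EXECUTION_CAPABLE,
                 PhiScope.EHR_WRITE_EXECUTE, EuAiActPosture.TRANSPARENCY_OBLIGATION)

changed = escalated_axes(v1, v2)
print(changed)
```

A non-empty result is a cue to re-run classification before shipping, since a higher level on any axis may change what evidence is required.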

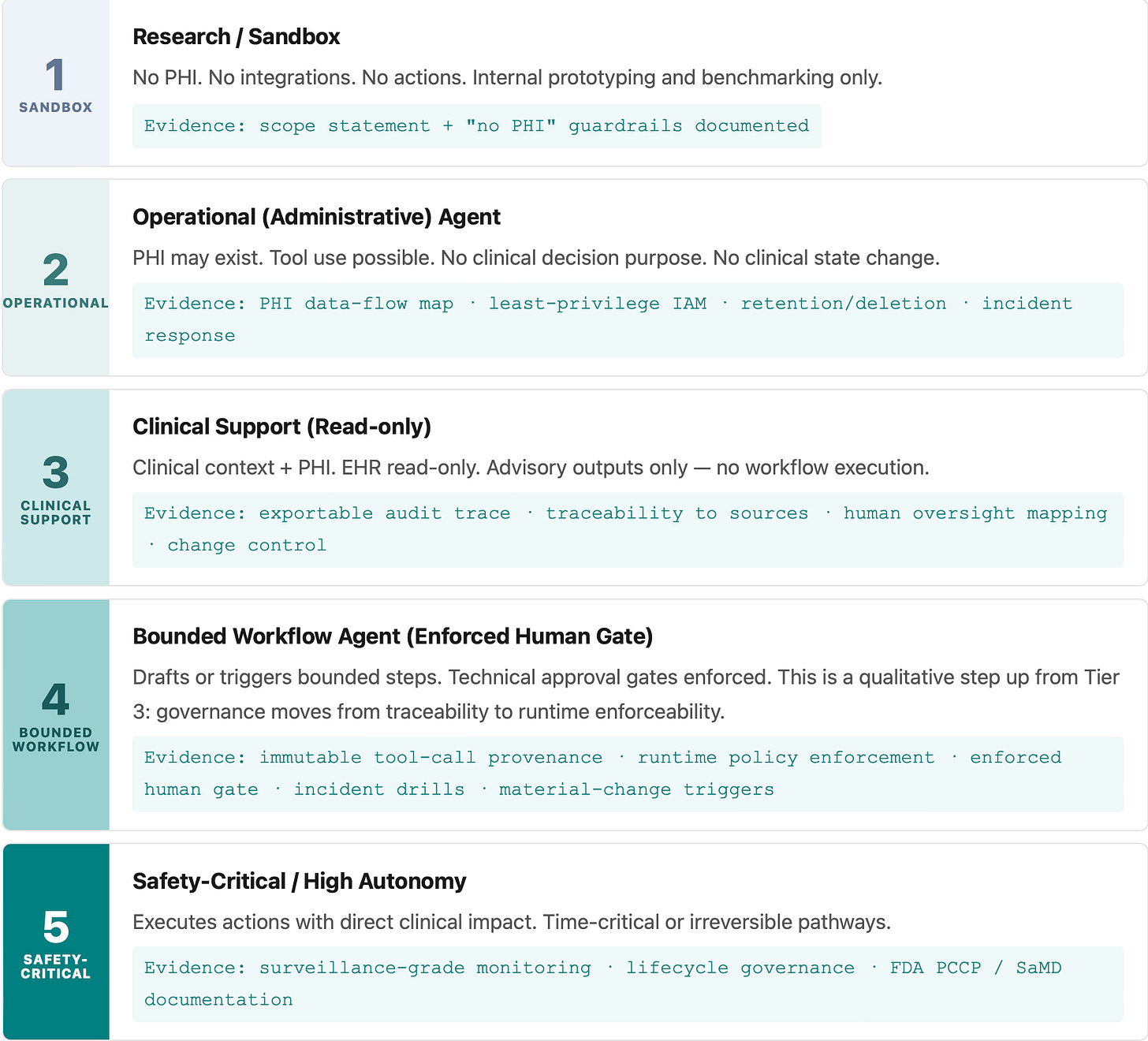

Tier 1–5: The Readiness Ladder

Each tier defines what an agent is permitted to do and what evidence must exist before it ships.

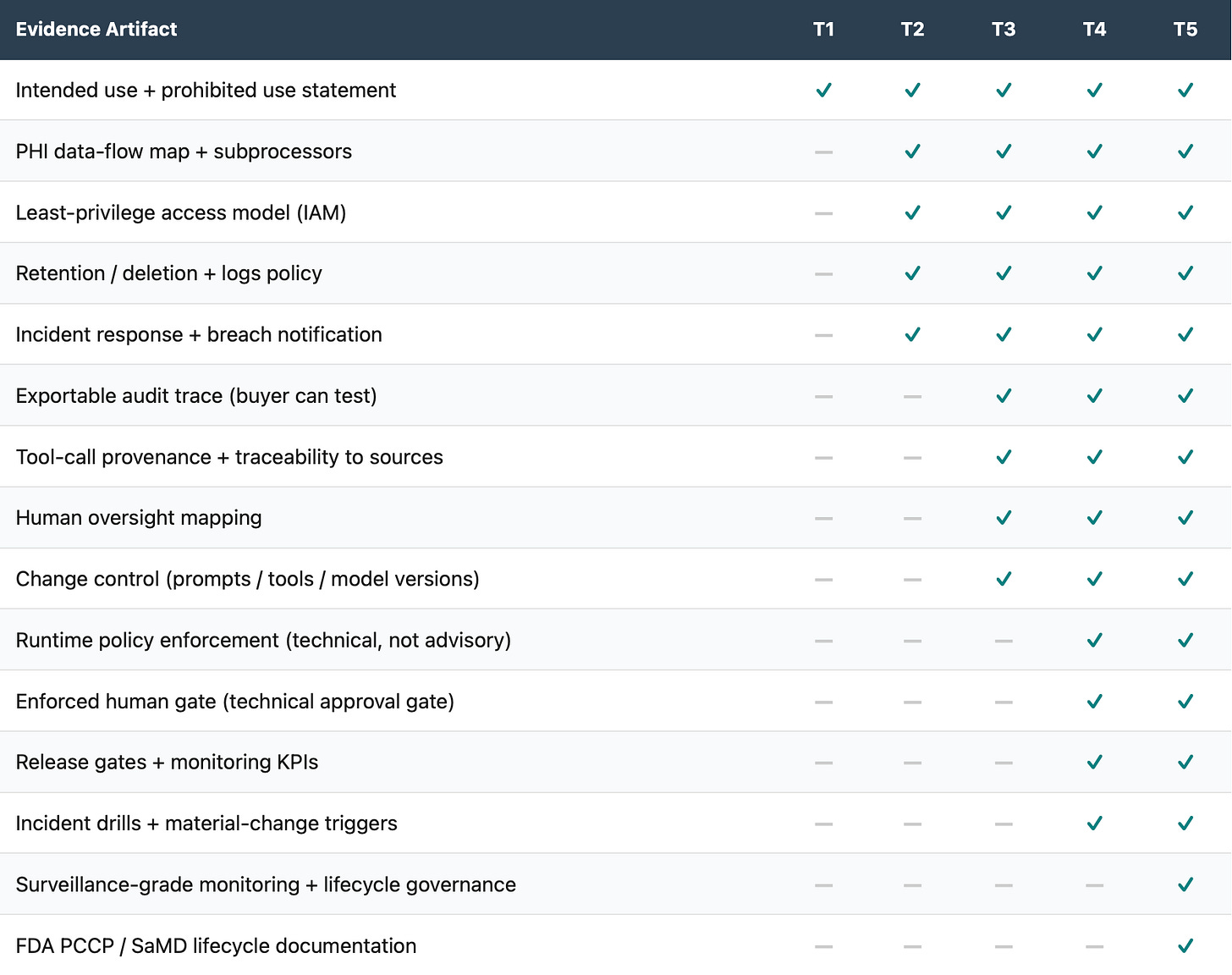

Evidence Pack by Tier

Procurement doesn’t buy your principles. It buys your evidence.

Mixed-function products must be classified per function (admin vs medical-purpose) — the evidence pack applies to the highest tier present.
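The "highest tier present" rule reduces to taking a maximum over per-function tiers. The sketch below assumes each function has already been assigned a Tier from 1 to 5; the function names and tier numbers are placeholders for illustration, not assignments made by this taxonomy.

```python
def governing_tier(function_tiers: dict) -> int:
    """Return the tier whose evidence pack applies to the whole product."""
    if not function_tiers:
        raise ValueError("at least one classified function is required")
    for name, tier in function_tiers.items():
        if tier not in range(1, 6):
            raise ValueError(f"{name}: tier must be between 1 and 5, got {tier}")
    # The evidence pack follows the most demanding function in the product.
    return max(function_tiers.values())


# Placeholder tiers for a mixed-function product (illustrative values only).
tiers = {"appointment_scheduling": 2, "symptom_triage": 4}
print(governing_tier(tiers))
```

In practice this means adding one higher-tier function to an otherwise administrative product raises the evidence bar for the whole procurement review.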

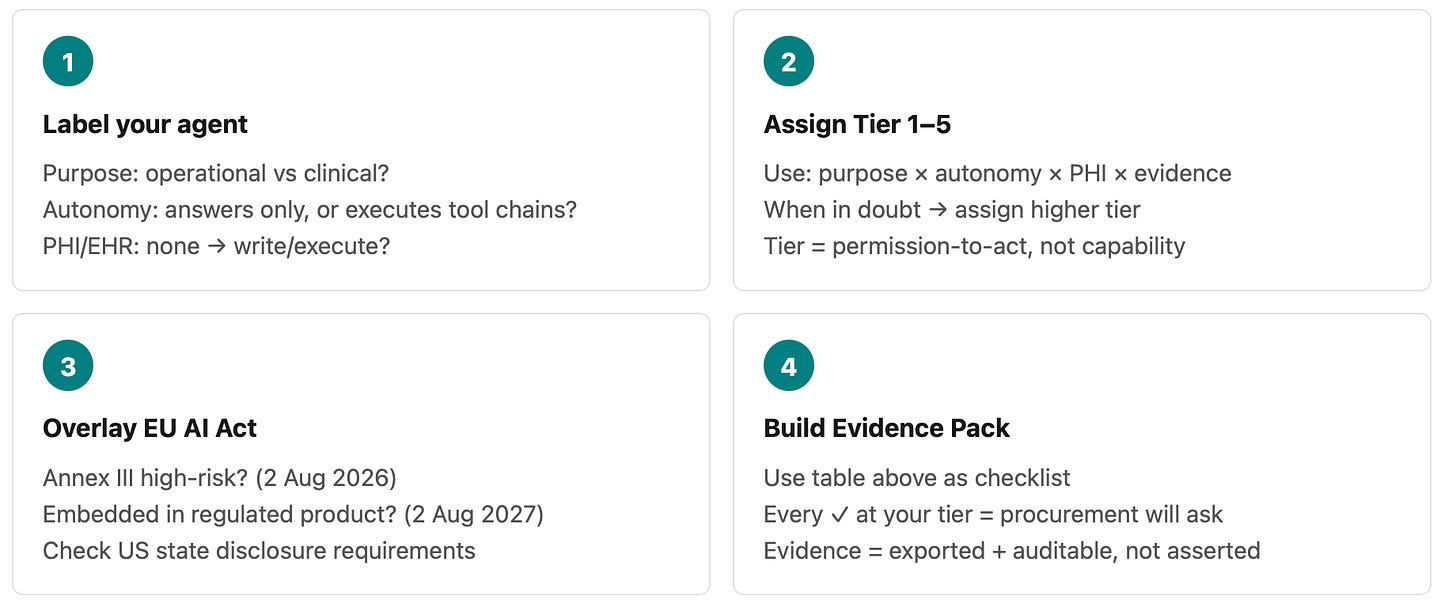

How to Use This Taxonomy (10 Minutes)

Sources

Peng, C. et al. (2026). A Comprehensive Taxonomy and Analysis of LLM-based Healthcare Agent Systems. IEEE Access. (Regulatory & Compliance constraints analysis; “compliance must be evidenced, not asserted” framing.)

EU AI Act (Regulation (EU) 2024/1689), Annex III; Articles 6, 43, 72. Progressive timeline: general application Aug 2026; regulated-product embedding Aug 2027.

US FDA: Clinical Decision Support guidance (21st Century Cures Act); PCCP guidance principles.

ONC HTI-1 Rule: predictive DSI definition and FAVES framework.

CA AB 3030 (effective Jan 2025) · Texas TRAIGA (effective Jan 2026) · Illinois WOPR Act (effective Aug 2025).

Suggested citation: Kushpelev, V. (2026). Healthcare AI Agent Readiness Taxonomy: Evidence-Based Gates for Safe Clinical Autonomy. H-GCL Hub, v0.1. viktoriakushpelev.com

Disclosure: This taxonomy is developed independently. No confidential information is shared. Views are my own.